Our Technology

State Space Model networks, advanced quantization-aware training and innovative compiler technology converge to create a breakthrough in power-efficient computation. This combination lets AI-driven applications achieve exceptional performance while keeping power consumption low, redefining what's possible in edge computing.

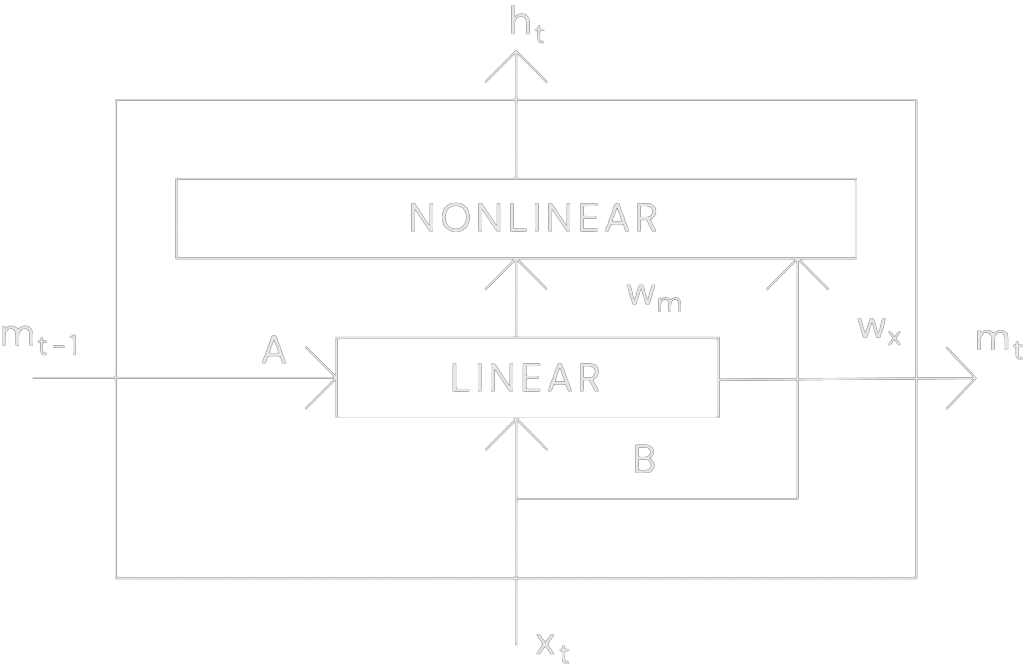

State Space Models

The Legendre Memory Unit (LMU) is ABR’s patented breakthrough that helped define state-space neural networks. ABR recognized early that these state space models (SSMs) were ideal for edge devices, enabling best-in-class voice and DSP models and setting the stage for low-power agentic AI.

Game-Changing Technology

The TSP1 combines state-space neural networks, optimized hardware and intelligent power management. Supported by our training and compiler tools, it delivers a complete and highly efficient AI processing solution.

First patented state-space network

The Legendre Memory Unit (LMU) is the first in a new class of state-space networks that are far more computationally efficient than other popular network architectures.
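To make the idea of a state-space memory more concrete, here is a minimal Python/NumPy sketch of the linear memory at the heart of the published LMU formulation: a continuous-time system theta * dm/dt = A m + B u that compresses the last theta seconds of an input u into a small vector m of Legendre coefficients. This is an illustration under stated assumptions, not ABR's TSP1 implementation: it uses a simple forward-Euler step (other discretizations such as zero-order hold are also common), omits the learned nonlinear layers of a full LMU network, and the function names `lmu_matrices`, `run_memory` and `decode` and the parameter values are hypothetical.

```python
import numpy as np
from numpy.polynomial import legendre


def lmu_matrices(order):
    """Continuous-time (A, B) of the LMU memory (hypothetical helper)."""
    A = np.zeros((order, order))
    B = np.zeros(order)
    for i in range(order):
        B[i] = (2 * i + 1) * (-1) ** i
        for j in range(order):
            if i < j:
                A[i, j] = -(2 * i + 1)
            else:
                A[i, j] = (2 * i + 1) * (-1) ** (i - j + 1)
    return A, B


def run_memory(signal, order=6, theta=1.0, dt=0.001):
    """Feed a sampled signal through theta * dm/dt = A m + B u using forward Euler."""
    A, B = lmu_matrices(order)
    m = np.zeros(order)
    for u in signal:
        m = m + (dt / theta) * (A @ m + B * u)
    return m


def decode(m, r):
    """Approximate u(t - r * theta) for r in [0, 1] from the Legendre coefficients m."""
    # Shifted Legendre polynomials: P~_i(r) = P_i(2r - 1).
    return legendre.legval(2 * np.asarray(r) - 1, m)


# Usage: after 20 window lengths of a constant input of 1.0, the window is flat,
# so m should settle near [1, 0, 0, 0, 0, 0] and decode to ~1.0 at every delay.
m = run_memory(np.ones(20_000))
print(np.round(m, 3))
print(decode(m, [0.0, 0.5, 1.0]))
```

The point of the sketch is the cost profile: each time step is one small fixed-size matrix-vector update, regardless of how long the remembered window theta is.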

Better scaling for large models

The TSP1 scales well to large AI models while maintaining robust performance and speed, making it a good fit for demanding applications such as deep learning and advanced analytics.

State-of-the-art benchmarks

The TSP1 achieves state-of-the-art benchmark results, demonstrating top-tier performance on AI and machine learning tasks and meeting the highest industry standards.

Optimized TSP hardware

The TSP1's custom hardware is optimized for time series processing. It delivers peak performance and efficiency with minimal power consumption, making it well suited to edge computing.

Data efficiency

The TSP1 chip delivers exceptional data efficiency, processing complex AI tasks on under 10 mW of power. That makes it an ideal choice for energy-conscious AI applications.
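To put the sub-10 mW figure in perspective, the back-of-the-envelope calculation below converts a constant power draw into ideal battery runtime. The 1000 mAh, 3.7 V cell is a hypothetical example, the function name is illustrative, and the estimate counts only the AI workload's draw, ignoring the rest of the device, conversion losses and battery ageing, so real runtimes will be shorter.

```python
def battery_runtime_hours(capacity_mah, voltage_v, power_mw):
    """Ideal runtime of a constant load: stored energy (mWh) divided by draw (mW)."""
    energy_mwh = capacity_mah * voltage_v  # mAh x V = mWh
    return energy_mwh / power_mw


# Hypothetical cell: 1000 mAh at a nominal 3.7 V, powering only a 10 mW workload.
hours = battery_runtime_hours(1000, 3.7, 10)
print(f"{hours:.0f} h, about {hours / 24:.1f} days")
```

Under these assumptions the cell stores 3700 mWh, which at 10 mW works out to about 370 hours, or roughly 15 days of continuous operation.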

Natural Language Interfaces

The TSP1 supports advanced natural language interfaces, enabling seamless interaction with AI devices. It is well suited to AR/VR, smart home and smart medical devices.

Get in touch

ABR is a leader in AI chip innovation; our patented technologies and groundbreaking research set us apart from the competition.